Claude Code Leaked Source Explained: What 390,000 Lines of TypeScript Reveal

Anthropic accidentally shipped source maps in their Claude Code npm package, exposing 390,000 lines of TypeScript. Here's what the leaked source reveals about hidden features, code quality, anti-distillation systems, and why Anthropic should open source it.

Anthropic accidentally included source maps in a Claude Code npm release, exposing roughly 390,000 lines of TypeScript source code. The leak reveals hidden features like Dream Mode, Coordinator Mode, and anti-distillation defenses. Theo from t3.gg broke down the entire situation, and this analysis adds engineering context on what the code tells us about how AI coding tools actually work under the hood.

Video Summary and Key Insights

Theo Browne, creator of the T3 stack and one of the most-watched web dev creators on YouTube, covers the full story of the Claude Code source leak (on his birthday, no less). The video walks through how source maps work, why Anthropic accidentally shipped them in the npm package, the conspiracy theories that followed, what the leaked code reveals about unreleased features and internal practices, a code quality review (7/10), and pointed advice for how Anthropic should respond. The single most important takeaway: Claude Code ranks last among Opus harnesses on Terminal Bench, and the leaked source shows Anthropic has been copying behaviors from open-source alternatives like OpenCode.

Key Insights:

- Source maps were the culprit, not a security breach. Anthropic likely added source maps to debug rate limit issues, then accidentally published them in the npm package. This is the second time this has happened.

- Claude Code scores last place on Terminal Bench for Opus harnesses. Cursor's harness pushed Opus from 77% to 93% on Matt's benchmark. There are 39 harness-model pairs that outscore Claude Code.

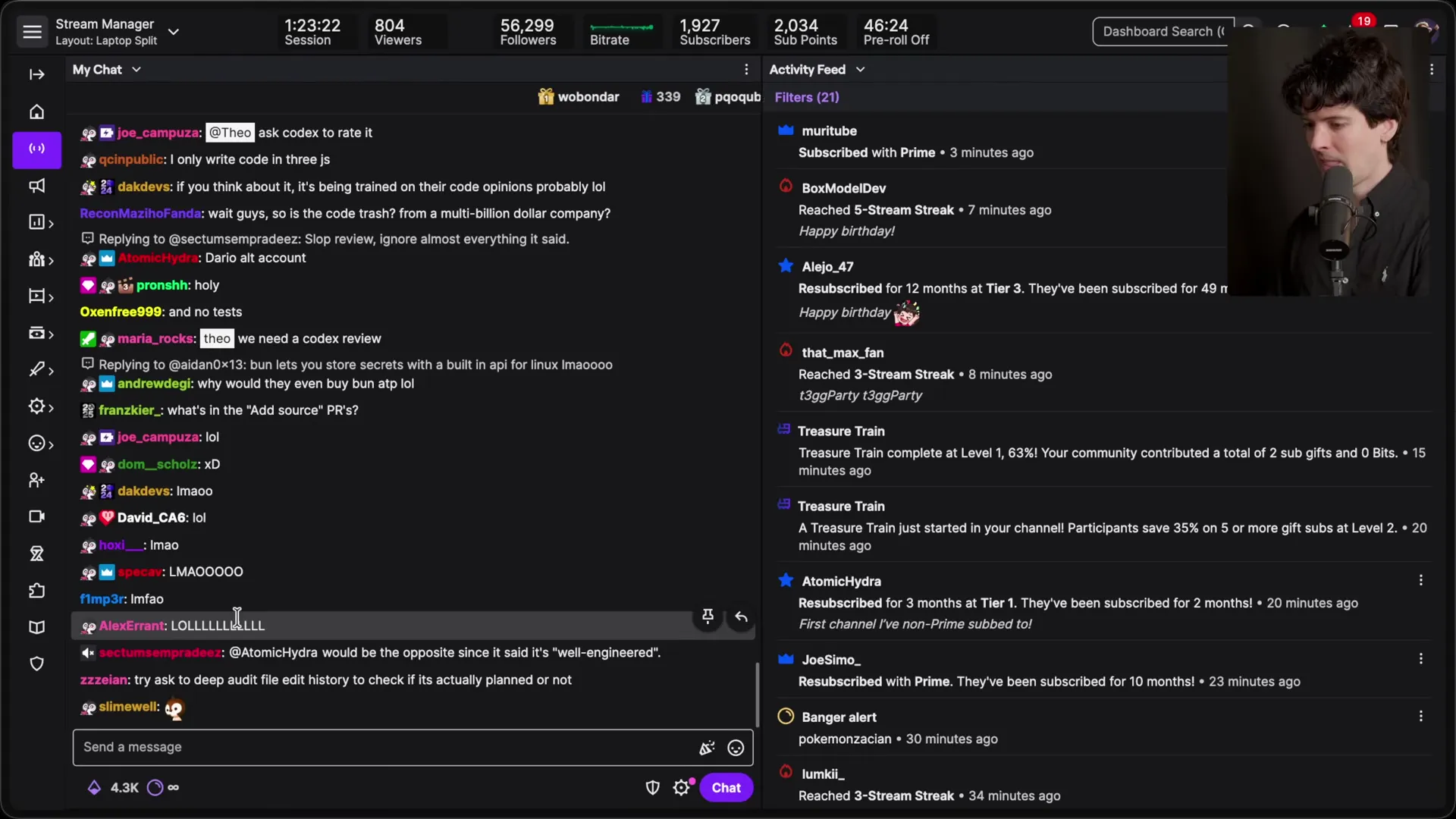

Not only is Claude Code not a good thing for others to reference, the opposite is actually true. One of the funniest things I realized is that when you look for OpenCode in the repo for Claude Code, you will find multiple instances of them referencing OpenCode source in order to match OpenCode's behaviors.

- Anti-distillation systems inject fake tool calls into conversation histories. Anthropic claims Chinese labs are using Opus interaction data to train competing models, so they built a "distillation resistant mode" that poisons the data with fake tool calls.

- Coordinator Mode would spin up five or more parallel Claude Code agents per request. Sub-agents share the prompt cache to reduce cost, but Theo suspects the expense is why it hasn't shipped yet.

- KAIROS is an always-on proactive agent. It runs background heartbeats asking "anything worth doing right now?" and can edit files, push notifications, make pull requests, and respond to PR feedback automatically.

Just open source it. I don't think they have to do this immediately. What I think would be totally reasonable is to give us a roadmap and timeline for when they will be open source.

- The codebase is 390,000 lines of TypeScript with only 38 instances of `any` across 500+ files. Code quality was rated 7/10 by Claude itself, with solid type safety but too many god files (5,000+ lines each) and over 1,000 scattered feature flags.

- Anthropic has filed more DMCA takedown requests than possibly any company in history. They're now DMCA-ing people for forking the official Claude Code GitHub repo, which doesn't even contain the source code.

How Did Source Maps Expose Claude Code's Entire Codebase?

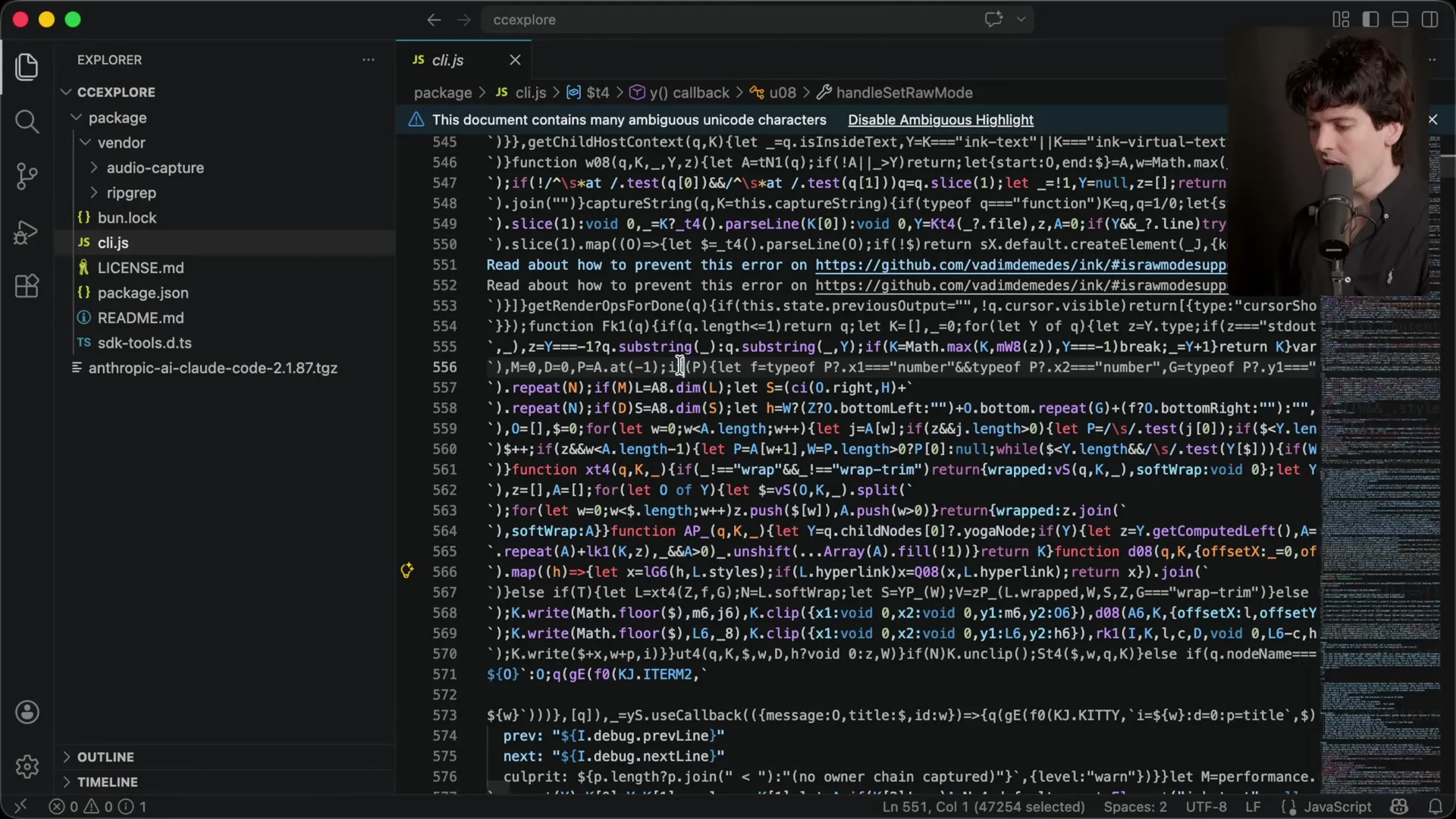

If you've worked with JavaScript bundlers, you know the drill. TypeScript gets compiled to JavaScript, then minified and obfuscated into unreadable blobs. Claude Code's main cli.js file is 13 megabytes of minified JavaScript — essentially one very long line of code that nobody can read.

Source maps solve the debugging problem. They create a mapping between the minified output and the original source, so error messages point to actual file names and line numbers instead of cli.js:1:493847. Tools like Sentry let you upload source maps privately so they never reach end users.
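To make the exposure concrete: the version 3 source map format has an optional `sourcesContent` array that embeds the full text of each original file next to its path in `sources`. The sketch below assumes the published maps carried that field (the video summary doesn't spell this out) and shows how little work it takes to turn a map back into source files. The example map, its path, and its content are made up for illustration.

```typescript
// Minimal shape of a version 3 source map (only the fields used here).
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

interface RecoveredFile {
  path: string;
  content: string;
}

// Pair each entry in `sources` with its embedded text, skipping entries
// whose content was not embedded in the map.
function recoverSources(map: SourceMapV3): RecoveredFile[] {
  const contents = map.sourcesContent ?? [];
  const files: RecoveredFile[] = [];
  map.sources.forEach((path, i) => {
    const content = contents[i];
    if (typeof content === "string") {
      files.push({ path, content });
    }
  });
  return files;
}

// Hypothetical example map; the paths and content are invented.
const exampleMap: SourceMapV3 = {
  version: 3,
  sources: ["src/example.ts", "src/missing.ts"],
  sourcesContent: ["export const answer: number = 42;\n", null],
  mappings: "",
};

// Only src/example.ts has embedded content, so only it is recovered.
const recovered = recoverSources(exampleMap);
console.log(recovered.map((f) => f.path));
```

A real recovery script would read the `.map` file from disk and write each entry out, but the core step is just this pairing of two arrays.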

The problem? Anthropic shipped the source maps inside the npm package itself. According to Theo, this is likely connected to the rate limit issues they were investigating:

Multiple employees at Anthropic posted updates about how they're seeing higher rate limit hits more so than expected. It is my assumption that they wanted to get better logs in the production builds of Claude Code in order to see why people were hitting these limits.

This wasn't a one-time mistake either. Anthropic had previously leaked the source in an earlier release, which led to hundreds of DMCA takedown requests on GitHub. The current leak spread so far and fast — the mirrored repo hit 57,000 forks and 54,000 stars — that suppression became impossible.

James's take: As someone who builds and ships npm packages for WebSearchAPI.ai, this is a cautionary tale about CI/CD pipelines. Source maps should be uploaded to your error tracking service and excluded from the published package via .npmignore or the files field in package.json. Anthropic using Claude Code to build Claude Code makes this kind of circular mistake more likely because agents don't always catch build configuration drift.
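One cheap guard is a pre-publish check that fails the build when a `.map` file is about to ship. The sketch below is my own illustration, not anything from Anthropic's pipeline. It assumes you feed it the `files` array that `npm pack --dry-run --json` prints, and the file list here is hypothetical.

```typescript
// Assumed shape of one entry in the `files` array from `npm pack --dry-run --json`.
interface PackedFile {
  path: string;
  size: number;
}

// Return the paths of any source maps that would be published.
function findSourceMaps(files: PackedFile[]): string[] {
  return files.map((f) => f.path).filter((p) => p.endsWith(".map"));
}

// Hypothetical file list for illustration.
const packed: PackedFile[] = [
  { path: "package.json", size: 1200 },
  { path: "cli.js", size: 13_000_000 },
  { path: "cli.js.map", size: 50_000_000 },
];

const leakedMaps = findSourceMaps(packed);
if (leakedMaps.length > 0) {
  // In CI this is where the job would exit with a non-zero status.
  console.error(`Refusing to publish, source maps found: ${leakedMaps.join(", ")}`);
}
```

An allowlist in the `files` field of package.json is still the first line of defense. A check like this only catches the case where that allowlist drifts.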

Was the Claude Code Leak Intentional?

Short answer: no. Theo lays out multiple evidence points:

- The original R2 download link was taken down within hours. Cloudflare removed the hosted zip file before Theo went to bed the night of the leak.

- Anthropic nuked the npm release. They pulled the package version that contained the source maps, which also broke the Claude Agent SDK dependency chain.

- They're DMCA-ing everything. People forking the official Claude Code repo (which contains no source code) are receiving takedown notices.

Anthropic's official statement: "No sensitive customer data or credentials were involved or exposed. This is a release packaging issue caused by a human error, not a security breach."

Theo's reaction to the "human error" framing was worth watching:

Do we think this is really human error, do we think this might have been agent error? Either way, the emphasis on human error and not blaming their own agents and their own models is very funny to me.

The Bun conspiracy also got debunked. There's a known bug where bun serve can accidentally expose source maps in production, but Claude Code uses Bun as a bundler and local runtime, not as a web server. Jarred Sumner (Bun's creator, now at Anthropic) confirmed Claude Code doesn't use bun serve. This was the only official comment from an Anthropic employee about the leak.

What Does Terminal Bench Show About Claude Code vs. Competitors?

This was the most surprising part of the video for me. Claude Code is marketed as Anthropic's flagship coding tool, but the benchmark data tells a different story.

| Harness | Model | Score | Rank |
|---|---|---|---|
| Cursor | Opus | 93% | Top tier |
| Codex CLI | GPT-5.4 | 88% | High |
| Gemini CLI | Gemini | 57% | Mid |
| Claude Code | Opus | 77% | Last for Opus |

According to Terminal Bench, Claude Code sits at 39th place overall. When filtered to Opus-only harnesses, it's dead last. That's a 16-percentage-point gap between Claude Code and Cursor running the same Opus model.

James's take: This matters for anyone building Claude Code skills or integrating web search agent capabilities. The harness — the wrapper that manages context, tool calls, and agent orchestration — affects model performance as much as the model itself. If you're investing in Claude's ecosystem, know that the model is excellent but the harness is holding it back relative to what Cursor or open-source alternatives can extract from the same Opus model.

The leaked source also showed Claude Code referencing OpenCode's source to match its behaviors for things like terminal scrolling. As Theo put it: the closed-source options are copying from the open-source ones.

What Hidden Features Did the Leak Reveal?

The leaked source contained several unreleased features, all gated behind GrowthBook feature flags (Anthropic switched from Statsig after OpenAI acquired it):

Dream Mode

Background agents automatically review past sessions and consolidate memories while you're away. The goal: make Claude Code behave the way you ask without you having to repeat the same instructions.

Coordinator Mode

Spins up multiple parallel workers, each with full tool access but specific instructions. One Claude Code session can spawn five or more sub-agents. Sub-agents share the prompt cache to cut input token costs. The shared cache works by keeping identical context up to the branching point, so the common prefix is billed at the cheaper cached-input rate and only each worker's divergent tokens are billed in full.
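A bit of arithmetic shows why the shared prefix matters. The sketch below compares input cost for a set of workers with and without a shared cache. Every number in it is a hypothetical placeholder in arbitrary price units, not Anthropic's pricing, and the model is deliberately simplified: it ignores output tokens and any surcharge for writing to the cache.

```typescript
// All prices and token counts are hypothetical placeholders, not real rates.
interface Pricing {
  inputPerToken: number; // full price for an uncached input token
  cachedInputPerToken: number; // discounted price for a cache-read input token
}

// Input cost for `workers` sub-agents that share `sharedTokens` of context
// and each add `uniqueTokens` of their own instructions.
function inputCost(
  workers: number,
  sharedTokens: number,
  uniqueTokens: number,
  pricing: Pricing,
  shareCache: boolean,
): number {
  if (!shareCache) {
    return workers * (sharedTokens + uniqueTokens) * pricing.inputPerToken;
  }
  // Simplification: the first worker pays full price for the shared prefix
  // and every other worker reads it from the cache.
  const firstWorker = sharedTokens * pricing.inputPerToken;
  const cachedReads = (workers - 1) * sharedTokens * pricing.cachedInputPerToken;
  const unique = workers * uniqueTokens * pricing.inputPerToken;
  return firstWorker + cachedReads + unique;
}

// Arbitrary units: a cached token costs one tenth of an uncached one.
const pricing: Pricing = { inputPerToken: 10, cachedInputPerToken: 1 };

const withoutCache = inputCost(5, 100_000, 2_000, pricing, false);
const withCache = inputCost(5, 100_000, 2_000, pricing, true);
console.log({ withoutCache, withCache }); // 5,100,000 vs 1,500,000 units
```

With these made-up numbers the shared cache cuts input cost by roughly 70%, but five workers still cost noticeably more than one, which fits Theo's suspicion about why the feature hasn't shipped.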

KAIROS (AFK Mode)

An always-on proactive agent that runs background heartbeats. Every few seconds it receives a prompt: "anything worth doing right now?" It can edit files, push notifications, make pull requests, and respond to PR feedback without you being present.
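The heartbeat pattern itself is simple, and a toy version helps show what "proactive" means here. The sketch below is my own illustration with hypothetical names, not the leaked implementation. The model call is replaced by a hard-coded stub so the control flow is visible.

```typescript
// Hypothetical names throughout; this is not the leaked implementation.
type Decision = { act: false } | { act: true; task: string };

interface WorkspaceState {
  failingTests: number;
  unansweredReviewComments: number;
}

const HEARTBEAT_PROMPT = "anything worth doing right now?";

// Stand-in for the model call. A real agent would send the prompt and the
// workspace state to the model and parse its reply; this stub ignores the prompt.
function decide(_prompt: string, state: WorkspaceState): Decision {
  if (state.failingTests > 0) {
    return { act: true, task: "fix failing tests" };
  }
  if (state.unansweredReviewComments > 0) {
    return { act: true, task: "respond to PR feedback" };
  }
  return { act: false };
}

// One heartbeat: ask the question, return the chosen task or null to stay idle.
function heartbeat(state: WorkspaceState): string | null {
  const decision = decide(HEARTBEAT_PROMPT, state);
  return decision.act ? decision.task : null;
}

// A scheduler would call heartbeat() on a timer, for example via setInterval.
const idle = heartbeat({ failingTests: 0, unansweredReviewComments: 0 });
const busy = heartbeat({ failingTests: 0, unansweredReviewComments: 2 });
console.log(idle, busy);
```

The interesting design question is the idle path: most heartbeats should return nothing, otherwise an always-on agent burns tokens and makes changes nobody asked for.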

Ultra Plan and Ultra Review

Ultra Plan runs remote agents for long complex planning tasks. Ultra Review is automated code review using remote agents with billing controls — likely tied to the $25-per-PR code review feature Anthropic announced.

Undercover Mode

A flag for Anthropic employees contributing to external open-source projects. The system prompt includes the instruction "do not blow your cover" so the PR doesn't reveal it was made with Claude Code.

James's take: Coordinator Mode is the one to watch. The agent orchestration patterns we've been tracking (Paperclip, CrewAI, LangGraph) are solving the same multi-agent coordination problem from the outside. Anthropic building it directly into the harness means they can optimize prompt caching at the infrastructure level — something third-party orchestrators can't do. But the economics are brutal: five parallel Opus instances per request. Prompt cache sharing helps, but this likely costs $2-5 per coordinated task at current pricing.

How Does Anti-Distillation Work in Claude Code?

One of the more surprising discoveries was Anthropic's "distillation resistant mode." Distillation is when someone takes conversation histories from a model and uses them to train a competing model to behave similarly.

They're trying to infect that data by sending fake tool calls into the histories, so when you try to use those histories to train, you end up with a bunch of fake data in them that makes it less likely the model behaves.

Anthropic has publicly accused Chinese labs of using Opus interaction data to improve their own models. The defensive strategy: inject plausible-looking but fake tool calls into the conversation history. Anyone scraping these histories for training data would unknowingly incorporate corrupted examples, degrading the trained model's tool-use behavior.

James's take: This is a real concern for anyone building on Claude's web search API or any API-based AI service. If your application stores and replays conversation histories, the anti-distillation system means those stored histories might contain fabricated tool calls that weren't part of the actual interaction. This could affect logging, debugging, and compliance auditing if you're not aware of it.

How Good Is the Actual Code Quality?

Theo asked Claude to rate its own codebase. The score: 7 out of 10. Here's what the analysis found:

| Metric | Result |
|---|---|
| Type safety | Strong: 38 `any` instances across 500+ files |
| Naming consistency | Good |
| Error handling | Solid |
| Dead code | Clean: few commented-out blocks |
| Async patterns | Modern: 258 .then() chains, zero callback hell |
| Linting | 248 Biome ignore directives |
| Test files | None (likely not included in the source maps) |
| God files | Several with 5,000+ lines |
| Feature flags | 1,000+ references across 250 files |
| Environment variables | Scattered throughout with no centralized management |

The total: approximately 390,000 lines of TypeScript. For comparison, OpenAI's Codex CLI is 515,000 lines of Rust.

Other interesting findings from the source:

- CLAUDE.md gets reinserted on every turn change, not just once at the top. When the model switches from agent to user, the CLAUDE.md content is injected again at the current position in the history to reinforce instructions.

- Five compaction strategies exist for managing context window limits. Compaction (when the context window fills and older messages get summarized) is still a weak point — Theo noted he trusts OpenAI's compaction more.

- Five permission levels cascade: policy, flags, local, project, user. The `claude_settings.json` can set always-allowed patterns that downstream settings can't override.

- Random ID generation avoids profanity using a curated avoid list. There's also a regex that detects when users swear at Claude Code, feeding into analytics to track user frustration.

- No centralized secret sanitization before logging. Token prefixes are logged in JWT utilities. This explains why users have accidentally leaked environment variables through Claude Code sessions.
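The permission cascade in the list above is the easiest of these to picture in code. The sketch below takes only the layer names from the video. The rule shape, the exact-match lookup, the "ask" fallback, and the assumption that earlier layers in the list win are all mine, so treat it as an illustration of a first-match cascade, not a reconstruction of Claude Code's resolver.

```typescript
// Layer order taken from the article; everything else is a hypothetical sketch.
const LAYER_ORDER = ["policy", "flags", "local", "project", "user"] as const;
type Layer = (typeof LAYER_ORDER)[number];
type Verdict = "allow" | "deny";

// Each layer maps an exact command string to a verdict.
// A real tool would match patterns instead of exact strings.
type LayerRules = Partial<Record<Layer, Record<string, Verdict>>>;

// The first layer in priority order that mentions the command decides,
// so later (downstream) layers cannot override it.
function resolvePermission(rules: LayerRules, command: string): Verdict | "ask" {
  for (const layer of LAYER_ORDER) {
    const verdict = rules[layer]?.[command];
    if (verdict !== undefined) {
      return verdict;
    }
  }
  return "ask"; // no layer has an opinion, so fall back to prompting the user
}

// Hypothetical rules for illustration.
const rules: LayerRules = {
  policy: { "git status": "allow" },
  project: { "git status": "deny", "rm -rf build": "allow" },
  user: { "rm -rf build": "deny" },
};

console.log(resolvePermission(rules, "git status")); // policy wins over project
console.log(resolvePermission(rules, "rm -rf build")); // project wins over user
console.log(resolvePermission(rules, "curl example.com")); // nobody decides
```

The useful property is that an organization-level policy can pin a decision once and no project or user setting can quietly undo it.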

The security concern about malicious npm packages is worth flagging. The leaked source references internal workspace packages that don't exist on npm. Someone registered those package names with disposable emails. Anyone cloning and building the leaked source without caution could install malicious packages instead of the intended workspace dependencies.
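If you do poke at a leaked or unfamiliar monorepo, a quick audit of which dependencies would be fetched from the public registry is worth doing before any install. The sketch below is a simplified illustration with hypothetical package names. It only compares dependency names against the packages present locally, and a human still has to judge each name it prints.

```typescript
// Hypothetical manifest shape, reduced to the fields this check needs.
interface Manifest {
  name: string;
  dependencies?: Record<string, string>;
}

// Return the dependencies of `pkg` that no local workspace package provides
// and that would therefore be resolved from the public registry.
function registryResolvedDeps(pkg: Manifest, workspace: Manifest[]): string[] {
  const local = new Set(workspace.map((m) => m.name));
  return Object.keys(pkg.dependencies ?? {}).filter((dep) => !local.has(dep));
}

// Hypothetical packages: "@internal/tools" is referenced but missing locally.
const app: Manifest = {
  name: "app",
  dependencies: {
    "@internal/core": "*",
    "@internal/tools": "*",
    typescript: "^5.0.0",
  },
};
const workspacePackages: Manifest[] = [{ name: "@internal/core" }];

// Anything internal-looking in this list is a red flag: the registry copy
// may have been published by someone else.
const external = registryResolvedDeps(app, workspacePackages);
console.log(external);
```

In this example the check surfaces `@internal/tools` alongside the legitimate `typescript` dependency, which is exactly the kind of name a squatter would register.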

What Should Anthropic Do About the Leak?

Theo had four specific recommendations, and I think each one is right:

1. Open source it. Not today, not this week, but commit to a timeline. Spend a month or two cleaning up the codebase, purging the commit history, and restructuring the repo. Then ship it. The "secret sauce" argument is dead — the code is out there, and it turns out the sauce isn't that secret.

2. Beat the leakers with better information. Right now, third-party analysis (including Claude's own interpretation of its code) is the primary source of information. Half of what's being shared is probably wrong. Anthropic should go through each leaked feature and explain it directly.

3. Let the engineers be human about it. Theo contrasted Anthropic's lawyerly silence with OpenAI's playful engagement. When Kevin from TanStack found a CSS overflow bug in t3 code, Jason from OpenAI made a self-deprecating joke about frontend model quality. That kind of authentic interaction matters more than press releases.

You built this reputation of the cool guy. You have to be the cool guy right now. If you're going to keep pretending you're the humans of the bunch, you gotta be the humans right now.

4. Stop the DMCA barrage. Filing takedowns against people forking a repo that doesn't contain source code is counterproductive. It makes Anthropic look like "lawyers are running your company, not developers."

James's take: From an infrastructure perspective, open-sourcing Claude Code would benefit the entire ecosystem. When we build web search agent skills, we're working against incomplete docs and testing locally to see if things even work. The CLAUDE.md reinsertion pattern, the permission cascade, the compaction strategies — knowing how these work from source rather than guessing from behavior would save every Claude Code developer significant time. Anthropic's competitors already have open-source CLIs. The leak removed the last strategic argument for keeping it closed.

Frequently Asked Questions

What caused the Claude Code source code leak?

Anthropic accidentally included source maps in a Claude Code npm package release. Source maps are files that link minified JavaScript back to original TypeScript source code. According to Theo Browne, Anthropic was likely investigating rate limit issues and added source maps for better debugging, then forgot to exclude them from the published package. This is the second time Anthropic has accidentally shipped source maps in a Claude Code release.

Is the Claude Code leak a security breach?

Anthropic stated: "No sensitive customer data or credentials were involved or exposed. This is a release packaging issue caused by a human error, not a security breach." The leaked code is the Claude Code CLI tool source, not user data, API keys, or model weights. However, the source did reveal that there's no centralized secret sanitization before logging, which could be a concern for users running Claude Code in sensitive environments.

How big is Claude Code's source code?

The leaked Claude Code source contains approximately 390,000 lines of TypeScript across 500+ files. For comparison, OpenAI's open-source Codex CLI is about 515,000 lines of Rust. The bundled cli.js file that users normally see is 13 megabytes of minified JavaScript.

What hidden features were found in the Claude Code leak?

The leaked source revealed several unreleased features gated behind GrowthBook feature flags: Dream Mode (automatic memory consolidation from past sessions), Coordinator Mode (parallel multi-agent execution), KAIROS (an always-on proactive agent that acts without user input), Ultra Plan and Ultra Review (remote agent execution for planning and code review), and an "undercover" flag for Anthropic employees contributing to external projects.

Is Claude Code open source now?

No. Claude Code is not officially open source. While the source code has been widely distributed through the leak, Anthropic has been aggressively filing DMCA takedown requests. The official Claude Code GitHub repository contains plugins and skills but not the CLI source code itself. Theo Browne's recommendation is that Anthropic should commit to an open-source timeline within one to two months.

How does Claude Code compare to other coding harnesses?

On Terminal Bench, Claude Code ranks 39th overall and last place among Opus-specific harnesses. The same Opus model scores 77% through Claude Code but 93% through Cursor's harness — a 16-percentage-point gap. Open-source alternatives like OpenCode, Gemini CLI, and Codex CLI generally outperform Claude Code as harnesses, and the leaked source shows Claude Code referencing OpenCode's source code to match its behaviors.

What is the anti-distillation system in Claude Code?

Claude Code includes a "distillation resistant mode" that injects fake tool calls into conversation histories. Anthropic has accused some labs of scraping Opus interaction data to train competing models. The defense: if someone uses these poisoned histories for training, their model would learn from fabricated examples, degrading its tool-use accuracy.

Is it safe to build and run the leaked Claude Code source?

Be extremely careful. The leaked source references internal workspace packages that don't exist on npm. Someone registered those package names with disposable email addresses for likely malicious purposes. Building the source without recreating the proper workspace dependencies could result in installing malicious packages. Ben (who helped Theo get it running) had to strategically recreate these dependencies to build it safely.

Key Takeaways

- Source maps were the cause: Anthropic accidentally published source maps in the Claude Code npm package, exposing ~390,000 lines of TypeScript. This is the second time this has happened.

- Claude Code underperforms as a harness: Terminal Bench data shows Opus scores 77% through Claude Code but 93% through Cursor. The harness matters as much as the model.

- Hidden features reveal Anthropic's roadmap: Dream Mode, Coordinator Mode, KAIROS, and anti-distillation defenses show where Claude Code is headed, including always-on proactive agents and multi-agent orchestration.

- Code quality is decent but not great: 7/10 with strong type safety (38 `any` instances) but too many god files, scattered feature flags, and no centralized environment variable management.

- The open-source argument is settled: With the code already widely distributed, Anthropic's strategic reasons for keeping Claude Code closed source no longer hold. A timeline for open-sourcing is the best path forward.

I spent six hours watching, rewatching, and analyzing BREAKING: Claude Code source leaked by Theo - t3.gg (162,000+ views, published April 1, 2026) to pull out the engineering insights that matter most if you're building on Claude's ecosystem. The editorial commentary throughout reflects my own experience shipping developer tools at WebSearchAPI.ai.